The Cultural Shift AI Demands from Engineering Teams

I've been doing this long enough to have lived through a few revolutions. The dot-com bubble. The microservices fever. The Kafka-and-events hype that turned every system into a distributed spaghetti of topics and consumers. Each wave arrived with the same energy: this changes everything, adopt or fall behind.

And each time, the teams that struggled weren't the ones that couldn't learn the technology. They were the ones that couldn't navigate what the technology asked of their culture.

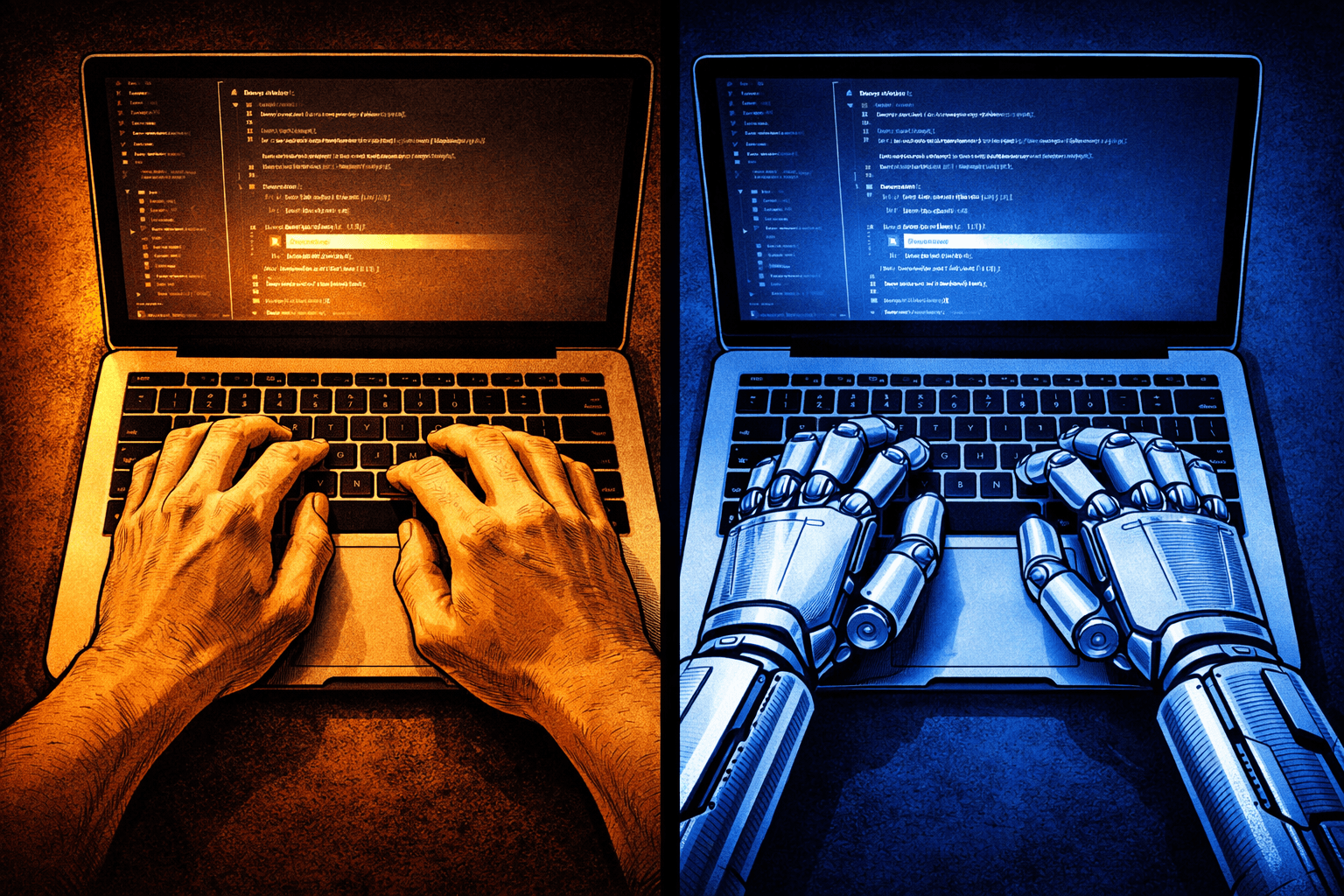

AI is no different. Except for one thing: this time, it doesn't just ask the team to learn new tools. It asks engineers to reckon with their own identity.

The Pattern I Keep Seeing

Every major tech shift I've lived through followed roughly the same arc. First, excitement among the early adopters. Then pressure from the top — why aren't we using this yet? Then a messy middle period where some people are experimenting, others are quietly resistant, and nobody has quite figured out what "doing it well" actually looks like. Eventually, a new normal settles in, and the thing that felt disruptive becomes table stakes.

What determines how painful that middle period becomes isn't technical skill. It's psychological safety. Teams that have built a culture where trying things, failing, and learning from that failure is genuinely accepted (not just paid lip service) move through the hype curve with far less friction. Teams that haven't built that culture stall.

This is as true for AI as it was for microservices.

The Craft Identity Problem

But AI does add something new to the equation. Unlike moving to an event-driven architecture or adopting a new message broker, AI tools don't just change how engineers work — they partially automate what many engineers have spent years getting good at.

That lands differently.

Most experienced engineers I know have a deep relationship with their own craft: the instinct for clean design, the pattern recognition, the care taken with code that will outlive its author. That knowledge is real and earned. When a junior engineer can generate, in thirty seconds, a working implementation that would have taken them considerably longer six months ago, the experienced engineer sitting next to them faces a question they didn't expect: if generating code is easy, where does my value live?

Teams that haven't had this conversation explicitly tend to have it implicitly — in the friction during code review, in the subtle status games around "real" work versus AI-assisted work. The culture absorbs the question whether you surface it or not.

Trust Is the Answer — In Both Directions

In my teams, the principle I come back to is trust above all else. Failure isn't something to be avoided — it's something to be learned from. The only failure I don't accept is repeating the same mistake twice because we didn't stop to understand what happened the first time.

That principle matters enormously for AI adoption.

Engineers need psychological safety to experiment badly before they experiment well. The first few weeks of using AI tooling seriously are usually humbling — rubber-stamping suggestions you shouldn't, missing things you'd have caught if you'd written it yourself, wasting time rewriting AI output that didn't quite fit. That's the learning curve. Teams that are afraid to look incompetent skip it, which means they either avoid the tools entirely or adopt them superficially and never develop real judgment about when and how to use them.

Trust has to move in both directions, too. If AI-assisted code is treated with institutional suspicion — if there's an unspoken understanding that it somehow "doesn't count" — engineers will hide their usage rather than develop good practices around it openly. You end up with a covert culture instead of a shared one, which is the worst outcome.

What This Asks of Leaders

If you're leading an engineering team right now, the job isn't to write an AI policy. Policies are how you avoid having the real conversation.

The real conversation is about identity and value: helping your engineers find where craft still lives, and always will. The judgment call before any code gets written. The architectural decision that accounts for the next three years. The review comment that's about design, not syntax. These things don't get easier with AI. They get more important, because everything else just got faster.

Create the conditions for honest experimentation — with the explicit understanding that failing is fine as long as the team learns from it and doesn't repeat it. Model it yourself. If you're experimenting with these tools and something didn't work the way you expected, say so.

I've watched this pattern play out enough times to be confident: the teams that come out the other side in good shape aren't the ones that moved fastest or had the best tooling. They're the ones that stayed curious together and kept enough trust in the room to be honest about what they didn't know yet.

That's not new. It just matters more than ever right now.